How We Cut Our LLM Costs 60% With Request Routing

A practical breakdown of how intelligent routing, caching, and model selection through LangRouter can dramatically reduce your AI infrastructure costs.

Latest news and updates from LangRouter

Side-by-side pricing comparison of GPT-5, Claude Opus 4.6, and Gemini 2.5 Pro with real cost calculations for production workloads.

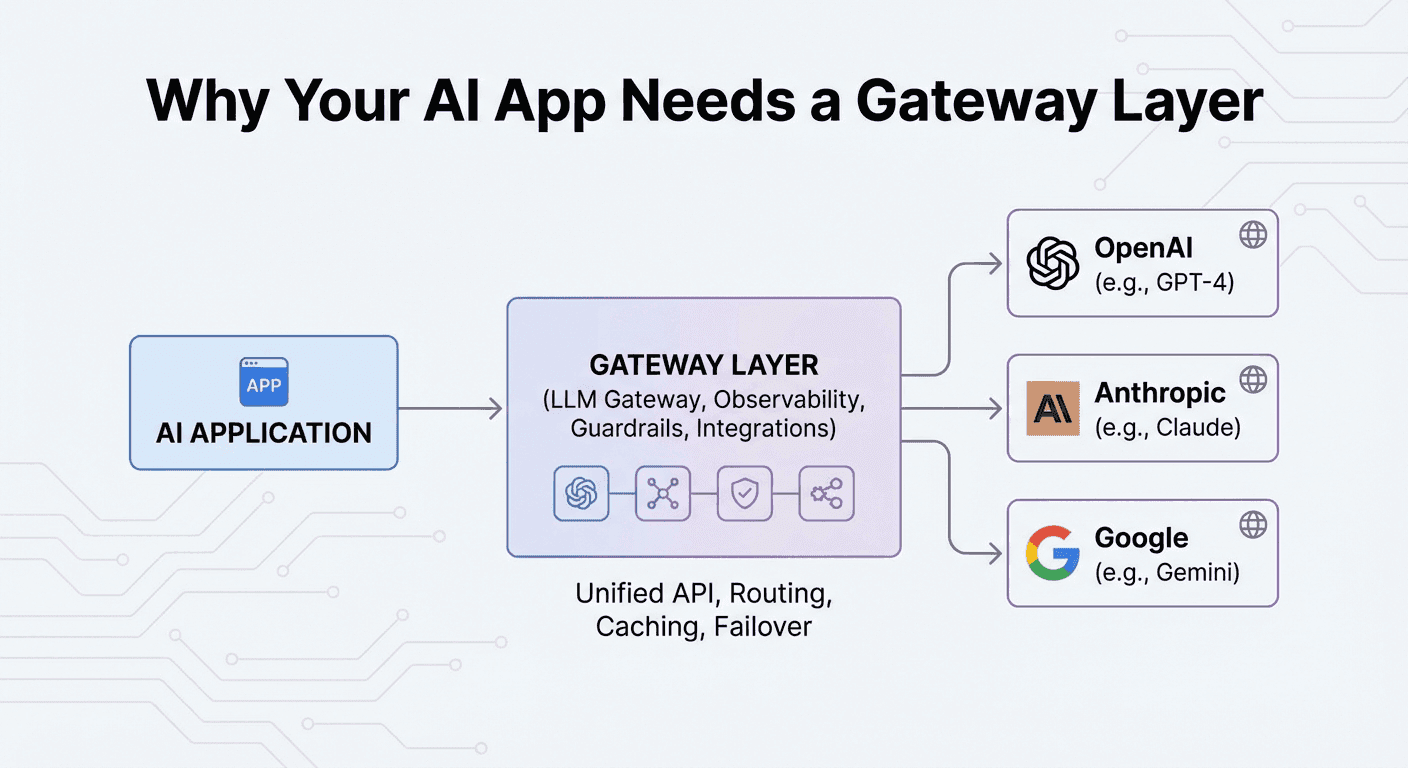

Why building directly against a single LLM provider's API is riskier than you think, and how a gateway layer protects your AI investment.

What an LLM gateway does, why it matters, and how it lets you ship AI features faster by abstracting away provider complexity.

Learn why simple LLM proxies aren't enough and how a unified AI gateway delivers centralized access control, cost visibility, compliance, and security.

Connect your internal LLM deployments or any OpenAI-compatible API to LangRouter and get the same analytics, caching, and routing.